Blockchain basics

These illustrations are meant to help explain Blockchain fundamentals. The original (Bitcoin, or Timechain) whitepaper, as written by the illustrious Satoshi Nakamoto, forms the basis for these drawings.

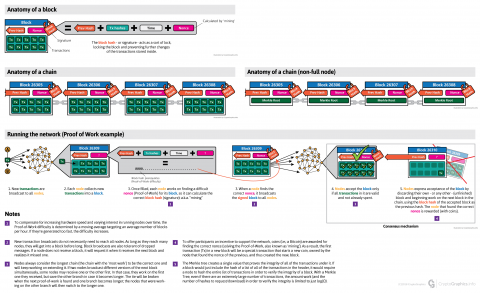

The block

The block is a container for simple A -> B style transactions, each of which is signed by the sending party (A) to authorize the transfer to the receiving party (B).

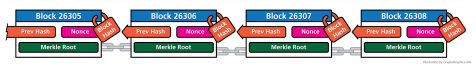

The block hash, a kind of fingerprint of the block, acts as a sort of lock: any later change to the transactions stored inside would change the hash and be detected. It is computed from the previous block hash, the hashes of the transactions contained inside, the block’s creation time, and an arbitrary number, the nonce, which miners vary until the resulting hash meets the difficulty requirement. See Proof-of-Work for more detail on this process.

See note 6.
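The way the block hash is built from its inputs can be sketched in a few lines of Python. This is a simplified illustration, not Bitcoin's actual header format: the names `sha256d`, `block_hash` and `mine` are hypothetical, the fields are joined as plain text rather than serialized into a binary header, and "difficulty" is modelled as a required number of leading zero hex digits.

```python
import hashlib


def sha256d(data: bytes) -> str:
    """Double SHA-256 of the input, returned as a hex string."""
    return hashlib.sha256(hashlib.sha256(data).digest()).hexdigest()


def block_hash(prev_hash: str, tx_hashes: list, timestamp: int, nonce: int) -> str:
    """Hash the four inputs named in the text (simplified text encoding)."""
    payload = "|".join([prev_hash, *tx_hashes, str(timestamp), str(nonce)])
    return sha256d(payload.encode("utf-8"))


def mine(prev_hash: str, tx_hashes: list, timestamp: int, zero_digits: int = 3):
    """Try nonces 0, 1, 2, ... until the hash starts with enough zeros.

    Assumption: leading zero hex digits stand in for the real difficulty rule.
    """
    nonce = 0
    while True:
        candidate = block_hash(prev_hash, tx_hashes, timestamp, nonce)
        if candidate.startswith("0" * zero_digits):
            return nonce, candidate
        nonce += 1
```

Calling `mine("0" * 64, [sha256d(b"A -> B: 1")], 1600000000)` returns a nonce and a hash that starts with three zeros. Changing any transaction hash afterwards yields a different block hash, which is what makes the "lock" effective.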

The chain

The chain is formed by all blocks linked in sequence. A new block is appended (mined) roughly every 10 minutes (see Proof-of-Work) and uses the block hash of the previous block to create its own block hash. This strong form of linking makes earlier blocks increasingly hard to corrupt as more blocks are added on top, because changing one block would require redoing the Proof-of-Work of that block and of every block after it.
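The linking described above can be illustrated with a minimal sketch. It leaves out Proof-of-Work entirely and only shows how each block's hash depends on its predecessor; the names `build_chain` and `first_invalid_block`, and the dictionary layout of a block, are assumptions made for this example.

```python
import hashlib

GENESIS_PREV = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(prev_hash: str, data: str) -> str:
    """Hash a block's contents together with the previous block's hash."""
    return hashlib.sha256((prev_hash + data).encode("utf-8")).hexdigest()


def build_chain(payloads):
    """Link the payloads into a chain, each block storing its parent's hash."""
    chain = []
    prev = GENESIS_PREV
    for data in payloads:
        current = block_hash(prev, data)
        chain.append({"prev_hash": prev, "data": data, "hash": current})
        prev = current
    return chain


def first_invalid_block(chain):
    """Return the index of the first block that breaks the chain, or None."""
    prev = GENESIS_PREV
    for index, block in enumerate(chain):
        links_to_parent = block["prev_hash"] == prev
        hash_matches = block_hash(block["prev_hash"], block["data"]) == block["hash"]
        if not (links_to_parent and hash_matches):
            return index
        prev = block["hash"]
    return None
```

If the data of block 1 is altered, `first_invalid_block` reports index 1. If the attacker also recomputes that block's hash, the check fails at block 2 instead, because block 2 still points at the old hash. Every later block would have to be rebuilt as well.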

The non-full node version of the chain

A non-full node runs on a ‘light’ blockchain copy in which, instead of all the transactions, each block only contains the Merkle Root (see ‘Running the network’ below). This provides a way to verify the chain of blocks, and to check that a given transaction is included in a block, without having to store the body of every transaction.

See note 5.
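To make the role of the Merkle Root more concrete, here is a small sketch of how a root can be computed and how a light node could check that one transaction belongs to a block. It assumes double SHA-256 and the convention of duplicating the last hash when a level has an odd number of entries; the function names and the proof format (a list of sibling hashes with a left/right flag) are choices made for this example, not a wire format.

```python
import hashlib


def h(data: bytes) -> bytes:
    """Double SHA-256, returned as raw bytes."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def _next_level(level):
    """Hash adjacent pairs; the caller guarantees an even number of entries."""
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves):
    """Reduce a non-empty list of transaction hashes to a single root."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last hash on odd levels
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves, index):
    """Collect the sibling hashes on the path from leaf `index` to the root."""
    proof = []
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # the other member of the pair
        proof.append((level[sibling], sibling < index))  # (hash, sibling_is_left)
        level = _next_level(level)
        index //= 2
    return proof


def verify_proof(leaf, proof, root):
    """Recompute the root from one leaf and its sibling hashes."""
    current = leaf
    for sibling, sibling_is_left in proof:
        current = h(sibling + current) if sibling_is_left else h(current + sibling)
    return current == root
```

For a block with five transactions the proof contains three sibling hashes, so the light node needs the header's Merkle Root plus three hashes rather than all five transactions. The proof length grows with the logarithm of the number of transactions.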

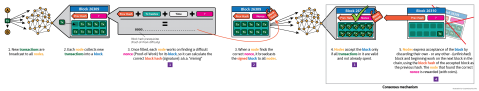

Running the network

Notes

1. To compensate for increasing hardware speed and varying interest in running nodes over time, the Proof-of-Work difficulty is determined by a moving average targeting an average number of blocks per hour. If blocks are generated too fast, the difficulty increases.

2. New transaction broadcasts do not necessarily need to reach all nodes. As long as they reach many nodes, they will get into a block before long. Block broadcasts are also tolerant of dropped messages. If a node does not receive a block, it will request it when it receives the next block and realizes it missed one.

3. Nodes always consider the longest chain to be the correct one and will keep working on extending it. If two nodes broadcast different versions of the next block simultaneously, some nodes may receive one or the other first. In that case, they work on the first one they received, but save the other branch in case it becomes longer. The tie will be broken when the next proof-of-work is found and one branch becomes longer; the nodes that were working on the other branch will then switch to the longer one.

4. To offer participants an incentive to support the network, coins (e.g. bitcoin) are awarded for finding a valid nonce (solving the Proof-of-Work, also known as ‘mining’). As a result, the first transaction (Tx) in a new block is a special transaction that starts a new coin (or coins), owned by the node that found the nonce and thus created the block. In Bitcoin’s case, this block reward is halved every 210,000 blocks. This interval is a hard limit, dictated by Bitcoin’s code (`Consensus.nSubsidyHalvingInterval = 210000`). At the time of writing, the reward is 12.5 BTC and will be halved to 6.25 BTC around June 2020.

5. The Merkle Root is a single value that proves the integrity of all of the transactions under it. If a block header just included the hash of a list of all of the transactions, a node would have to hash the entire list of transactions in order to verify the integrity of a block. With a Merkle Tree (of which the root is the final result), even if there is an extremely large number of transactions, the amount of work (and the number of hashes to request/download) needed to verify that a given transaction is part of the block is limited to O(log n), where n is the number of transactions in the block.

6. Originally, the block is a container of roughly 1 MB, containing ~2,000 simple A -> B style transactions.
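The halving schedule mentioned in the note on the block reward above can be worked out with a short calculation. The sketch below assumes an initial reward of 50 BTC (not stated in the text, but consistent with 12.5 BTC being the reward after two halvings) and counts in the smallest unit, 100,000,000 per bitcoin, so that halving is an integer operation. The function name `block_subsidy` is chosen for this example.

```python
COIN = 100_000_000            # smallest units per bitcoin
HALVING_INTERVAL = 210_000    # blocks between halvings, as given in the text
INITIAL_SUBSIDY = 50 * COIN   # assumption: reward of the very first blocks


def block_subsidy(height: int) -> int:
    """Block reward, in smallest units, for the block at the given height."""
    halvings = height // HALVING_INTERVAL
    if halvings >= 64:
        return 0  # the reward has long since reached zero
    return INITIAL_SUBSIDY >> halvings  # integer halving, rounding down


def total_supply() -> int:
    """Sum the reward over every halving period until it reaches zero."""
    total = 0
    halvings = 0
    while block_subsidy(halvings * HALVING_INTERVAL) > 0:
        total += HALVING_INTERVAL * block_subsidy(halvings * HALVING_INTERVAL)
        halvings += 1
    return total
```

Under these assumptions `block_subsidy(420_000) / COIN` gives 12.5 and `block_subsidy(630_000) / COIN` gives 6.25, matching the figures in the note. Because each period pays out half of the previous one, `total_supply()` stays just below 21 million coins.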